Why Grokipedia Will Matter for AI

Cheaper, faster AI training—at the cost of ideological fragmentation. How AI-curated data could slash training costs while polarizing the models we build.

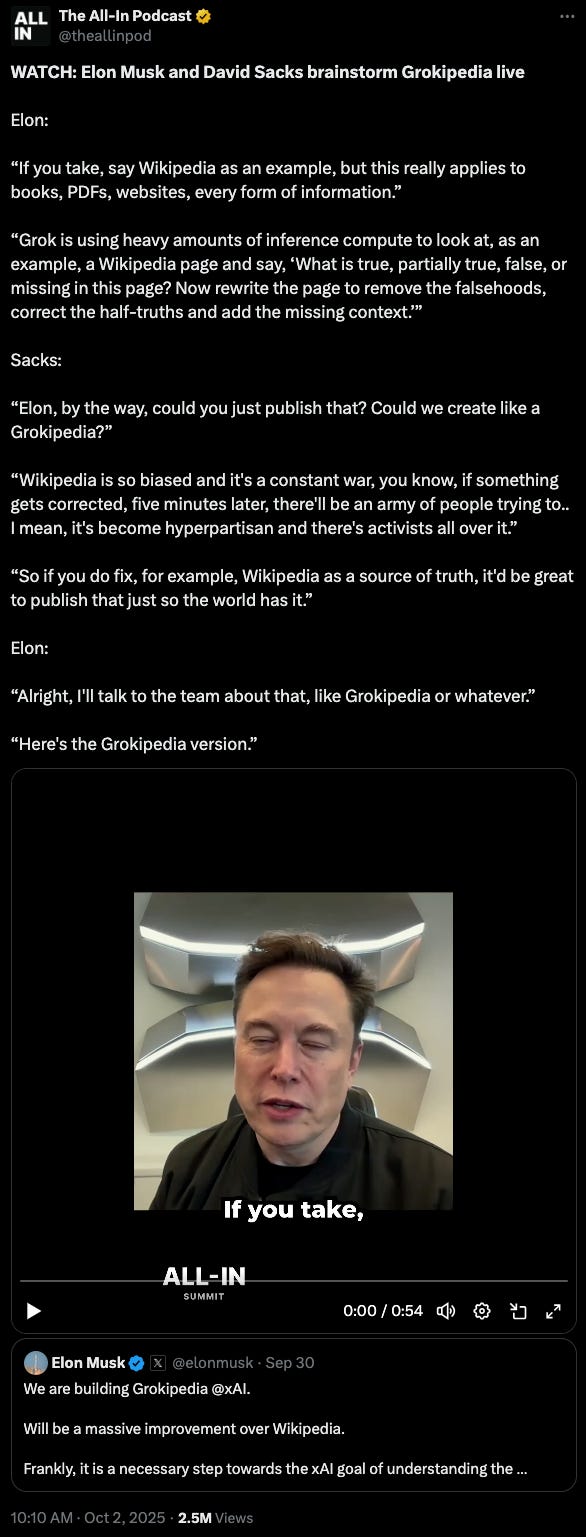

In September 2025, Elon Musk appeared at the All-In Podcast Summit, where a spontaneous exchange with co-host David Sacks sparked one of the most ambitious knowledge curation projects in recent memory.

During this live brainstorming session, Musk was explaining how his AI chatbot Grok processes and analyzes information across multiple formats—from Wikipedia pages to books, PDFs, and websites—using “heavy amounts of inference compute” to determine what is “true, partially true, false, or missing” before rewriting content to “remove the falsehoods, correct the half-truths and add the missing context.”

Sacks, in response, interjected with a question:

“Elon, by the way, could you just publish that? Could we create like a Grokipedia?”

Sacks argued that Wikipedia has “activists all over it,” and that fixing it as a source of truth would provide immense value to the world.

Musk’s characteristically direct response was “Alright, I’ll talk to the team about that, like Grokipedia or whatever.” That reply set in motion what he now describes as “a massive improvement over Wikipedia” and “a necessary step toward the xAI goal of understanding the Universe,” with the platform’s early beta launching just weeks after the impromptu conversation was captured on video.

Source: https://x.com/theallinpod/status/1973782724075806982

Recent Wikipedia Context

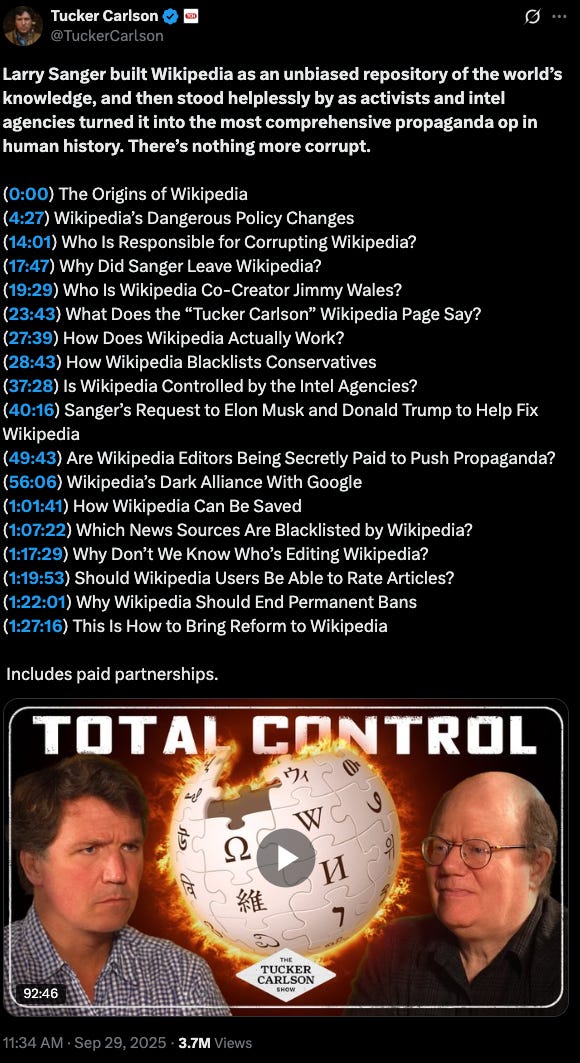

Musk’s comments came on the heels of Wikipedia co-founder Larry Sanger’s appearance on the Tucker Carlson Show in late September for a comprehensive 93-minute interview about Wikipedia.

During the interview, Sanger laid out his “Nine Theses,” a set of proposed reforms to Wikipedia: abolish source blacklists; end decision‑making by bare “consensus” in contentious areas; allow competing parallel articles; revive earlier neutrality standards; repeal “ignore all rules” as a governing crutch; enable public article ratings; end indefinite blocks; adopt a more legislative, transparent rulemaking process; and disclose leadership identities and roles.

Source: https://x.com/TuckerCarlson/status/1972716529608237173

LLMs’ Data Source Challenge:

The core challenge in building LLMs boils down to sourcing high-quality, unbiased training data at immense scale.

Models like GPT-4 reportedly ingest over 13 trillion tokens from noisy sources like Common Crawl and Wikipedia, which contain errors and biases even after filtering. Research on Wikipedia, for example, has shown that the site suffers from uneven global coverage, ideological skew, and domain-specific error rates. Source1. Source2. Source3.

To counter these problems, LLM developers combine probabilistic filtering with human-AI collaboration to extract signal from noise, building multi-stage data curation pipelines that apply heuristic filtering, classifier-based quality scoring, and constitutional AI principles. However, these approaches still leave models vulnerable to systematic biases and sophisticated misinformation campaigns that human oversight cannot catch at the trillion-token scale required for modern AI systems.
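To make the idea of a multi-stage pipeline concrete, here is a minimal sketch of two of the stages named above: a cheap heuristic filter followed by a quality score. Everything in it is an assumption for illustration. The `Doc` type, the thresholds, and the distinct-word ratio standing in for a trained classifier are hypothetical, not how any particular lab does it.

```python
import re
from dataclasses import dataclass


@dataclass
class Doc:
    text: str


def passes_heuristics(doc: Doc, min_words: int = 20, max_symbol_ratio: float = 0.3) -> bool:
    """Stage 1: cheap rule-based filter (thresholds are illustrative assumptions)."""
    words = doc.text.split()
    if len(words) < min_words:
        return False
    # Count characters that are neither alphanumeric nor whitespace.
    symbols = sum(1 for ch in doc.text if not (ch.isalnum() or ch.isspace()))
    return symbols / len(doc.text) <= max_symbol_ratio


def quality_score(doc: Doc) -> float:
    """Stage 2: toy stand-in for a learned quality classifier.

    Returns the fraction of distinct words; a real pipeline would call
    a trained model here instead.
    """
    words = re.findall(r"[a-z']+", doc.text.lower())
    return len(set(words)) / len(words) if words else 0.0


def curate(docs, score_threshold: float = 0.5):
    """Run both stages and keep documents that clear the score threshold."""
    survivors = [d for d in docs if passes_heuristics(d)]
    return [d for d in survivors if quality_score(d) >= score_threshold]
```

The ordering is the point: the inexpensive rules run first so that the costlier scoring step only sees documents that already look plausible.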

The fallout? LLMs don’t merely ingest errors; they perpetuate deep-seated distortions about whose knowledge counts, which viewpoints prevail, and what qualifies as “authoritative”—flaws that snowball as synthetic content floods the web, risking a catastrophic “model collapse” where AIs endlessly recycle incorrect views from their predecessors and data sources.

If Grokipedia were to yield measurable gains in truthfulness and internal consistency over Wikipedia, the downstream effect for LLMs could be a meaningful improvement in training efficiency. Studies on data curation have shown that when models are trained on carefully filtered, high-quality subsets, they can achieve equivalent or superior performance with dramatically less compute—often cutting training costs by roughly half and, in some fine-tuning contexts, by as much as 90%.

A Grokipedia-style corpus that mitigates Wikipedia-style weaknesses could, in effect, offer the language-model equivalent of higher-octane fuel—cleaner, denser, and more efficient for large-scale reasoning.

Underpinnings - Information Theory in AI:

A key insight for all LLM training is that LLMs function as information compression systems.

This means that academic research around information-theoretic quality measures is naturally applicable to data curation.

While these methods require higher upfront computational investment for data analysis, they generate substantial long-term savings through faster training, smaller datasets, and superior model performance—fundamentally changing the economics of LLM development from linear scaling costs to front-loaded investment with diminishing marginal costs.
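A quick back-of-the-envelope sketch shows how a front-loaded investment can pay off. The numbers below are purely hypothetical cost units, chosen only so that the curated run costs half the baseline, and the model is simple amortization of a one-time curation cost over repeated training runs.

```python
import math


def total_cost(upfront: float, cost_per_run: float, runs: int) -> float:
    """One-time upfront cost plus a fixed cost for each training run."""
    return upfront + cost_per_run * runs


def break_even_runs(curation_cost: float, baseline_run_cost: float, curated_run_cost: float):
    """Smallest number of runs at which curating is no more expensive than not curating.

    Returns None when the curated run is not cheaper, so curation never pays off.
    """
    saving_per_run = baseline_run_cost - curated_run_cost
    if saving_per_run <= 0:
        return None
    return math.ceil(curation_cost / saving_per_run)


# Hypothetical figures: curation costs 12 units once, each run drops from 10 to 5 units.
runs_needed = break_even_runs(curation_cost=12.0, baseline_run_cost=10.0, curated_run_cost=5.0)
print("break-even after", runs_needed, "runs")
print("baseline:", total_cost(0.0, 10.0, runs_needed), "curated:", total_cost(12.0, 5.0, runs_needed))
```

Under these assumed figures the curated approach is more expensive for the first two runs and cheaper from the third onward, which is the sense in which the cost is front-loaded.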

This represents a crucial evolution in AI development, where mathematical rigor in data selection replaces brute-force approaches, making advanced LLM training more accessible and cost-effective.

In his 1948 paper “A Mathematical Theory of Communication,” Claude Shannon addressed the fundamental challenge of transmitting reliable information through noisy channels, a problem that parallels today’s struggle to train AI systems on unreliable data sources.

Recent research has shown that applying information theory principles to LLM training data selection can simultaneously improve model performance and slash training costs. Studies like CLUES, D4, and the “Entropy Law” demonstrate that carefully curated datasets using Shannon entropy, compression ratios, and gradient-based influence measures can achieve up to 67% performance improvements while reducing training data requirements by orders of magnitude. Source.
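For readers who want a feel for the two simplest signals mentioned above, the sketch below computes character-level Shannon entropy and a compression ratio for two sample strings. It is an illustration under stated assumptions, not the method of any of the cited papers: `zlib` stands in for whatever compressor a real pipeline would use, and both sample texts are made up.

```python
import math
import zlib
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Character-level Shannon entropy in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def compression_ratio(text: str) -> float:
    """Raw byte size divided by zlib-compressed size; higher means more redundancy."""
    raw = text.encode("utf-8")
    if not raw:
        return 0.0
    return len(raw) / len(zlib.compress(raw))


# Two made-up samples: boilerplate-style repetition versus ordinary prose.
repetitive = "click here to subscribe " * 40
varied = ("Shannon framed communication as recovering a message sent through "
          "a noisy channel, and bounded how much reliable information any "
          "channel can carry.")

for name, sample in [("repetitive", repetitive), ("varied", varied)]:
    print(name, round(shannon_entropy(sample), 2), round(compression_ratio(sample), 2))
```

The repetitive sample compresses far more than the varied one, which is the intuition behind using compressibility as a redundancy signal: text that shrinks dramatically carries little new information per token.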

Moreover, Google recently reported a 10,000x reduction in training data while maintaining performance, which exemplifies this paradigm shift from “more data is better” to “better data is better.” Source.

The Echo Chamber Effect—Polarized LLMs?

With the impending launch of Grokipedia, users might face a choice in LLM usage that mirrors their selection of a news source.

Just as individuals gravitate toward news outlets like Fox News or MSNBC that reinforce their preexisting political ideologies, LLM users might soon find themselves choosing a model based on its perceived alignment with their own beliefs.

Conservative users, frustrated with what they view as “woke” censorship in models like ChatGPT or Claude, may flock to alternatives like Grok for its purported emphasis on unfiltered truth-seeking, while liberals might prefer systems that prioritize equity and progressive narratives, further entrenching societal divisions through algorithmically curated confirmation bias.

Conclusion:

What started as an impromptu exchange between Musk and Sacks could easily result in a massive improvement for the entire AI space. The request was simple: if Grok already has to rework large portions of Wikipedia, the by-product is a potential rival and improvement to Wikipedia, and it should be released to the public.

Moreover, Grokipedia represents more than a Wikipedia alternative; it’s a test case for whether AI-curated knowledge bases can genuinely outperform human-edited ones at scale. In doing so, it will likely carry information-theoretic principles from academic papers into production systems, moving all of AI forward.

But the public should stay alert. For xAI, the strategic advantage of controlling the training data supply chain, and the savings in training time it represents, could easily come at the expense of pushing LLMs into a politicized space, much like traditional news media.

If you haven’t already, now is probably the time to start watching your LLM news diet.

Until next time.

-Jack